Results

Input, background inpainting results, and results of the proposed object inpainting method.

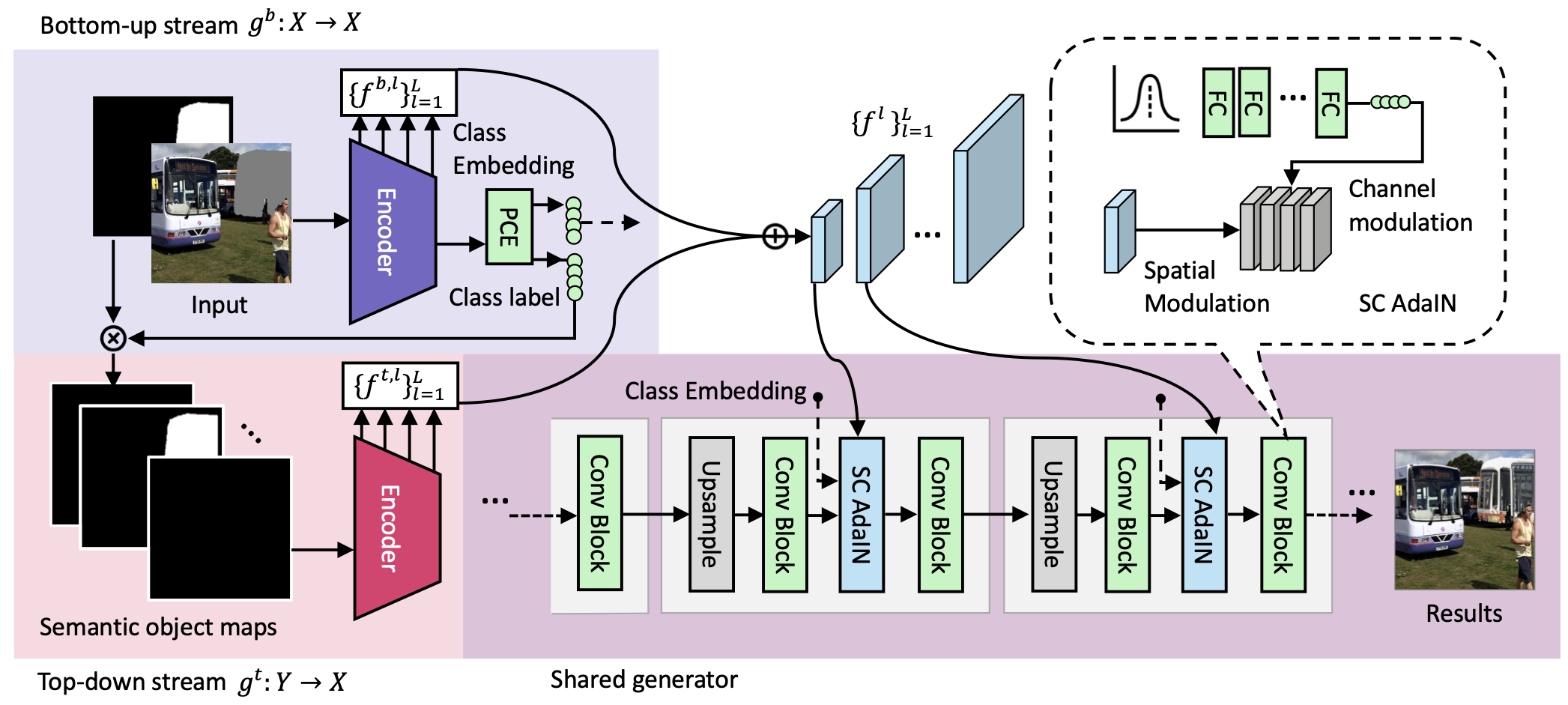

Overview of the proposed contextual object generation framework.

@article{zeng2022shape,
  title={Shape-guided Object Inpainting},
  author={Zeng, Yu and Lin, Zhe and Patel, Vishal M},
  journal={},
  year={2022}
}